Mamba-3 MIMO: Breaking the Transformers Monopoly 🐉

"One of the first Hugging Face-compatible implementations of the Mamba-3 MIMO architecture."

license: apache-2.0

tags:

- mamba

- mamba3

- ssm

- mimo

- linear-attention

- pytorch

- tilelang

library_name: transformers

pipeline_tag: text-generation

base_model: scratch

model_type: mamba3_custom

Introduction

This is a tiny experimental model built on the Mamba-3 MIMO (Multiple-Input Multiple-Output) architecture, a recent State Space Model (SSM) design as of 2026. The project demonstrates fast inference with MIMO blocks running on hardware-aware operators.

In a world dominated by $O(N^2)$ Transformers, Mamba-3 offers a linear-complexity $O(N)$ alternative that thrives on modern XPU architectures.

All Files

https://huggingface.co/aifeifei798/Mamba3-MIMO-Tiny-HF/tree/main

🌟 Key Highlights

- Next-Gen Architecture: Utilizes the latest Mamba-3 (MIMO) blocks, significantly enhancing information cross-flow and expression through multi-stream logic.

- Extreme Performance: Integrated with TileLang JIT compilation, featuring CUDA kernel-level optimizations for RTX 30/40/50 series GPUs.

- Industrial Packaging: Stored in

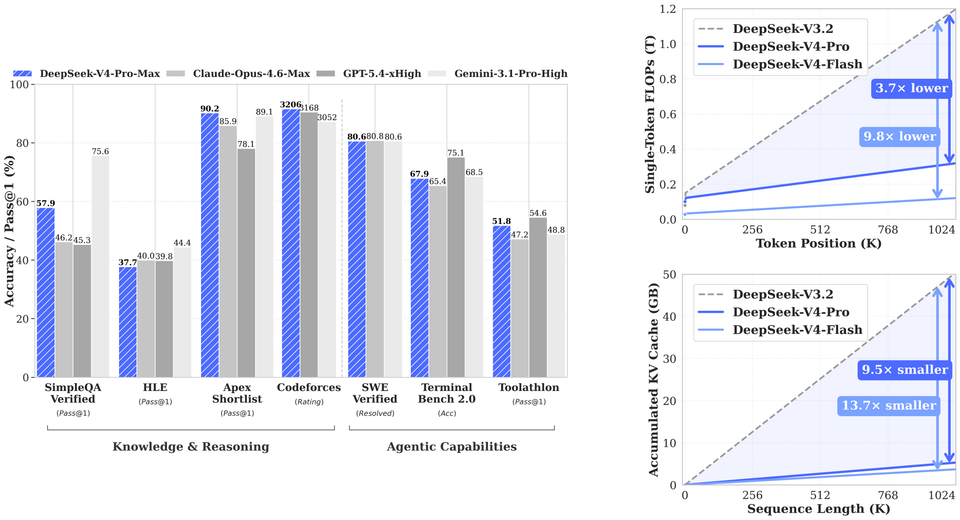

safetensorsformat, fully compatible with the Hugging Facetransformersecosystem viatrust_remote_code=True. - Linear Scaling: Inherits the efficiency of SSMs, where inference speed and memory usage scale linearly with sequence length, eliminating the KV Cache bottleneck.

🛠️ Environment Setup (Compiling from Source)

Mamba-3 utilizes cutting-edge CUDA kernels that are not yet available in standard pip packages. You must build from source to enable the MIMO kernels.

1. Prerequisites

We recommend Python 3.11 or 3.12 (3.13 is supported but experimental).

# Install core dependencies using 'uv' for speed

uv pip install torch torchvision safetensors accelerate transformers

2. Compiling Kernels

Build isolation must be disabled to link correctly with your local CUDA environment.

# Install the causal convolution operator

uv pip install git+https://github.com/Dao-AILab/causal-conv1d.git --no-build-isolation

# Force build Mamba-3 from the official source

MAMBA_FORCE_BUILD=TRUE uv pip install --no-cache-dir git+https://github.com/state-spaces/mamba.git --no-build-isolation
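
After the build finishes, a quick check that Python can locate the compiled packages can save a confusing failure later. The sketch below assumes the import names torch, causal_conv1d, and mamba_ssm; that the Mamba-3 code ships under mamba_ssm is an assumption, not something stated above.

```python
import importlib.util


def check_installed(modules=("torch", "causal_conv1d", "mamba_ssm")):
    """Map each module name to True if Python can locate it, without importing it."""
    return {name: importlib.util.find_spec(name) is not None for name in modules}


if __name__ == "__main__":
    # Module names above are assumed import names for the packages installed earlier.
    for name, found in check_installed().items():
        print(f"{name:15s} {'found' if found else 'MISSING'}")
```

This only confirms the packages are discoverable; it does not verify that the CUDA kernels were compiled for your GPU.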

🧪 Training: Adapting for Consumer GPUs

On mobile or consumer GPUs (like the RTX 3060/Laptop HM570), Shared Memory (SRAM) is limited (approx. 64KB). To prevent "Out of Shared Memory" errors, we must carefully tune the state dimensions.

1. "Golden" Parameters for Consumer Hardware

- d_model: 256

- d_state: 64 (Must be a multiple of 32)

- headdim: 64 (Aligned for m_warp * n_warp == 4)

- mimo_rank: 2 (Balanced parallel flow)

- chunk_size: 16 (The TileLang alignment unit)
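
The constraints above can be checked before launching a training run. This is a minimal sketch: TinyMamba3Config and check_config are hypothetical names introduced here for illustration, and only the two rules stated in the list (d_state a multiple of 32, chunk_size of 16) are enforced.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TinyMamba3Config:
    """Hypothetical container for the five settings listed above."""
    d_model: int = 256
    d_state: int = 64
    headdim: int = 64
    mimo_rank: int = 2
    chunk_size: int = 16


def check_config(cfg: TinyMamba3Config) -> list[str]:
    """Return human-readable problems; an empty list means the checks passed."""
    problems = []
    if cfg.d_state % 32 != 0:
        problems.append(f"d_state={cfg.d_state} is not a multiple of 32")
    if cfg.chunk_size != 16:
        problems.append(f"chunk_size={cfg.chunk_size} differs from the 16-token unit")
    return problems


default_problems = check_config(TinyMamba3Config())
bad_problems = check_config(TinyMamba3Config(d_state=48))
```

Returning a list instead of raising on the first failure lets you see every violated constraint at once when experimenting with sizes.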

2. The Precision Shield: Hybrid Dtype Strategy

Mamba-3's TileLang kernels require specific parameters to remain in FP32 for numerical stability, while heavy weights are cast to BF16.

import torch

def fix_mamba3_dtypes(model):
    for name, param in model.named_parameters():
        # Sensitive parameters: Bias, dt, A_log, norm, MIMO projections, and D weight
        # MUST remain in FP32 to avoid TileLang kernel crashes.
        if any(x in name.lower() for x in ["bias", "dt", "a_log", "norm", "mimo"]) or name.endswith(".D"):
            param.data = param.data.to(torch.float32)
        else:
            # Massive weight matrices cast to BF16 for memory efficiency
            param.data = param.data.to(torch.bfloat16)
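
To preview which parameters the rule above would keep in FP32 without loading a model, the name test can be pulled into a small pure-Python helper. This is a sketch: target_dtype_name is a hypothetical helper, and the parameter names are made-up examples, not taken from the actual checkpoint.

```python
FP32_KEYWORDS = ("bias", "dt", "a_log", "norm", "mimo")


def target_dtype_name(param_name: str) -> str:
    """Mirror the name test in fix_mamba3_dtypes, returning a dtype label."""
    lowered = param_name.lower()
    if any(key in lowered for key in FP32_KEYWORDS) or param_name.endswith(".D"):
        return "float32"
    return "bfloat16"


# Hypothetical parameter names, chosen only to exercise each branch.
examples = [
    "layers.0.mixer.A_log",
    "layers.0.mixer.dt_bias",
    "layers.0.mixer.D",
    "layers.0.mixer.in_proj.weight",
    "layers.0.norm.weight",
]
plan = {name: target_dtype_name(name) for name in examples}
for name, dtype in plan.items():
    print(f"{name:32s} -> {dtype}")
```

Because the test is a substring match, it is broader than it looks: any name containing "dt" (for instance one with "width" in it) would also be kept in FP32, so it is worth printing the plan for your own model before casting.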

📂 Project Structure & Workflow

This repository represents a complete loop from low-level operator tuning to high-level framework encapsulation.

The Development Stack

1. train_mamba3.py: The "Forge." Trains a tiny Mamba-3 model from scratch using raw tensor training.

2. inference_mamba3_native.py: The "Probe." Native inference using .bin weights to verify learning.

3. finalize_to_safetensors.py: The "Industrializer." Converts raw weights to safetensors and auto-generates the modeling_mamba3.py and configuration_mamba3.py scripts required for Hugging Face.

4. hf_load_and_run.py: The "Validator." Demonstrates loading the model via AutoModelForCausalLM with a custom dynamic padding generator.

🚀 Rapid Inference with HF

Since Mamba-3 kernels require sequence lengths to be multiples of 16, a custom generation loop is required:

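import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "aifeifei798/Mamba3-MIMO-Tiny-HF/mamba3_hf_ready"
tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(model_id, trust_remote_code=True, torch_dtype=torch.bfloat16, device_map="auto")

# Custom Dynamic Padding Logic for Mamba-3 (batch size 1)
def generate_step(model, tokens):
    curr_len = tokens.shape[1]
    # Number of pad tokens needed to reach the next multiple of 16
    pad_len = (16 - (curr_len % 16)) % 16
    if pad_len > 0:
        # Allocate the padding on the same device as the input instead of hard-coding .cuda()
        pad = torch.zeros((1, pad_len), dtype=torch.long, device=tokens.device)
        model_input = torch.cat([tokens, pad], dim=1)
    else:
        model_input = tokens
    with torch.no_grad(), torch.amp.autocast('cuda', dtype=torch.bfloat16):
        out = model(model_input)
    # Accept either a raw logits tensor or an output object exposing .logits
    logits = out.logits if hasattr(out, "logits") else out
    # Greedy pick at the last real (unpadded) position
    return torch.argmax(logits[:, curr_len - 1, :])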
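
The padding arithmetic and the surrounding loop can be tried without a GPU. The sketch below works on plain Python lists; pad_to_chunk, greedy_generate, and toy_step are hypothetical names, and toy_step is only a stand-in for a real model call such as generate_step.

```python
from typing import Callable, List

CHUNK = 16  # sequence-length multiple stated above


def pad_to_chunk(ids: List[int], pad_id: int = 0) -> List[int]:
    """Right-pad a token list so its length is a multiple of CHUNK."""
    pad_len = (CHUNK - len(ids) % CHUNK) % CHUNK
    return ids + [pad_id] * pad_len


def greedy_generate(step: Callable[[List[int], int], int],
                    prompt: List[int], max_new_tokens: int) -> List[int]:
    """Call step(padded_ids, last_real_index) repeatedly and append its result."""
    ids = list(prompt)
    for _ in range(max_new_tokens):
        padded = pad_to_chunk(ids)
        ids.append(step(padded, len(ids) - 1))
    return ids


# Stand-in for the model: returns the last real token plus one.
def toy_step(padded_ids: List[int], last_index: int) -> int:
    assert len(padded_ids) % CHUNK == 0
    return padded_ids[last_index] + 1


result = greedy_generate(toy_step, prompt=[5, 6, 7], max_new_tokens=3)
```

This loop re-runs the whole padded prefix on every step, which is simple but does not reuse the recurrent state between steps; it is meant to illustrate the padding, not to be an efficient decoder.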

💡 Strategic Insight: Bypassing the Hardware Wall

Why is Mamba-3 a game-changer for the global AI landscape, especially under hardware sanctions?

- "De-CUDA-fication": While we currently use CUDA, Mamba's logic (SSM/MIMO) is based on structured matrix multiplications. This should port well to Google TPUs or Chinese XPUs (Ascend, Moore Threads), reducing dependence on CUDA-specific kernels such as FlashAttention.

- HBM Optimization: Sanction-compliant GPUs (like the H20) have reduced TFLOPS but keep high HBM (High Bandwidth Memory) bandwidth. Mamba is a bandwidth-bound architecture, so it can achieve higher effective throughput on "down-spec'd" chips than Transformers.

- The End of "GPU Stacking": Transformers need large clusters for long contexts because attention compute grows quadratically and the KV Cache grows with every token of context. Mamba allows small-scale clusters to perform 100K+ context inference, democratizing long-context AI.
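
A back-of-the-envelope calculation makes the memory argument concrete. The sketch below compares a Transformer KV cache, which stores keys and values for every past token, with a fixed-size recurrent state. The layer and head counts are hypothetical, and the (heads, headdim, d_state) state layout is an assumption borrowed from Mamba-2-style models, not a confirmed detail of Mamba-3 MIMO.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_elem=2):
    """Keys and values cached for every past token in every layer."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem


def ssm_state_bytes(n_layers, n_heads, headdim, d_state, bytes_per_elem=4):
    """Fixed recurrent state, assuming a (heads, headdim, d_state) layout per layer."""
    return n_layers * n_heads * headdim * d_state * bytes_per_elem


# Hypothetical shapes chosen only for illustration.
LAYERS, HEADS, HEAD_DIM, D_STATE = 24, 16, 64, 64

state = ssm_state_bytes(LAYERS, HEADS, HEAD_DIM, D_STATE)
for seq_len in (1_000, 10_000, 100_000):
    kv = kv_cache_bytes(seq_len, LAYERS, HEADS, HEAD_DIM)
    print(f"{seq_len:>7} tokens: KV cache {kv / 2**20:9.1f} MiB, "
          f"SSM state {state / 2**20:5.1f} MiB")
```

Under these assumptions the KV cache grows in proportion to the context length while the state size does not depend on it at all, which is the property the bullets above rely on.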

📊 Training Stats

- Device: HM570 Laptop (NVIDIA RTX 40/50 Series)

- Optimizer: AdamW (lr=5e-4 for rapid convergence)

- Final Loss: 0.0249 (Verified on target knowledge sentences)

Citation

@misc{aifeifei_2026,

author = { aifeifei },

title = { Mamba3-MIMO-Tiny-HF (Revision 6724076) },

year = 2026,

url = { https://huggingface.co/aifeifei798/Mamba3-MIMO-Tiny-HF },

doi = { 10.57967/hf/8135 },

publisher = { Hugging Face }

}

Site: Feimatrix.com

Assistant: Gemini-3-Flash-Preview