Technical Analysis Report: DeepSeek-V4-Pro

1. Architectural Innovation & Specifications

The DeepSeek-V4-Pro architecture is a Sparse Mixture-of-Experts (MoE) model with a heavy focus on inference efficiency and extreme context lengths.

A. Scaling the MoE Framework

- Total Experts (n_routed_experts): 384. This is a significant jump from the 256 routed experts in DeepSeek-V3. By enlarging the expert pool while routing each token to only 6 experts (num_experts_per_tok: 6), the model gains parameter specialization without a proportional increase in per-token compute.

- Shared Experts: 1 always-active expert captures common knowledge, reducing redundancy among the routed experts.

- Routing Mechanism: The settings topk_method: "noaux_tc" and scoring_func: "sqrtsoftplus" indicate auxiliary-loss-free load balancing, which avoids the performance degradation usually associated with traditional balance losses.
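The routing described above can be sketched as follows. This is a minimal illustration, not DeepSeek's implementation: it assumes "sqrtsoftplus" means the square root of softplus, and it assumes the auxiliary-loss-free scheme adds a per-expert bias that affects only which experts are selected, not the gate weights. The names `sqrt_softplus` and `route` are hypothetical.

```python
import math

def sqrt_softplus(x: float) -> float:
    # ASSUMPTION: "sqrtsoftplus" is read here as sqrt(log(1 + e^x)).
    # The max/log1p form avoids overflow for large |x|.
    softplus = max(x, 0.0) + math.log1p(math.exp(-abs(x)))
    return math.sqrt(softplus)

def route(logits, bias, k=6):
    """Select k experts for one token and return normalized gate weights.

    Selection ranks experts by score + bias (the bias is a load-balancing
    knob in this sketch); gate weights use the unbiased scores only.
    """
    scores = [sqrt_softplus(z) for z in logits]
    ranked = sorted(range(len(scores)),
                    key=lambda i: scores[i] + bias[i],
                    reverse=True)
    chosen = ranked[:k]
    total = sum(scores[i] for i in chosen)
    return {i: scores[i] / total for i in chosen}

# One token routed over 384 experts, 6 active (values from the config above).
logits = [0.0] * 384
logits[5] = 3.0
gates = route(logits, bias=[0.0] * 384, k=6)
print(sorted(gates), f"active fraction = {6 / 384:.4f}")
```

Lowering the bias of an overloaded expert removes it from the top-k set without adding a balance term to the training loss, which is the general idea behind auxiliary-loss-free balancing.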

B. Extreme Context & Attention (1 Million Tokens)

- Max Position Embeddings: 1,048,576 tokens. This model is designed for massive document retrieval and long-chain reasoning.

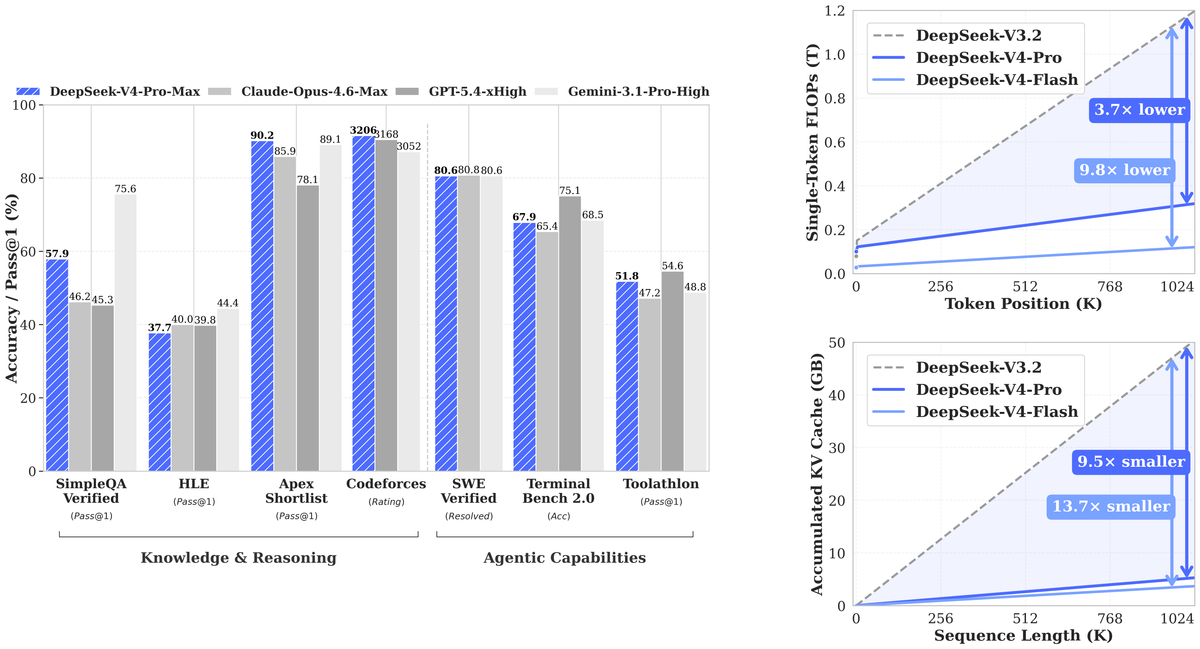

- MLA (Multi-head Latent Attention): Settings such as q_lora_rank: 1536 and qk_rope_head_dim: 64 confirm the use of MLA. MLA compresses the KV cache substantially, which is what makes serving a 1M-token context window practical.

- Hash Layers & Sinkhorn Iterations: The inclusion of num_hash_layers: 3 and hc_sinkhorn_iters: 20 suggests a hash-based attention or Sinkhorn-based mechanism. This likely helps the model route information across the 1M-token window more efficiently than standard global attention.
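To make the KV-cache argument concrete, the sketch below compares per-token cache size for standard multi-head attention against an MLA-style cache that stores one compressed latent plus one shared RoPE key per layer. Only qk_rope_head_dim = 64 and the 1,048,576-token context come from the configuration discussed here. The layer count, head count, head dimension, and kv_lora_rank are placeholder assumptions borrowed from the DeepSeek-V3 generation, so the result is an order-of-magnitude illustration only.

```python
def mha_cache_bytes_per_token(n_layers, n_heads, head_dim, bytes_per_elem=2):
    # Standard attention caches one key and one value vector per head per layer.
    return n_layers * 2 * n_heads * head_dim * bytes_per_elem

def mla_cache_bytes_per_token(n_layers, kv_lora_rank, qk_rope_head_dim,
                              bytes_per_elem=2):
    # MLA-style cache: one compressed KV latent plus one shared RoPE key per layer.
    return n_layers * (kv_lora_rank + qk_rope_head_dim) * bytes_per_elem

CONTEXT_TOKENS = 1_048_576   # from the config
QK_ROPE_HEAD_DIM = 64        # from the config
# ASSUMPTIONS (not stated in this report):
N_LAYERS, N_HEADS, HEAD_DIM, KV_LORA_RANK = 61, 128, 128, 512

mha_gib = mha_cache_bytes_per_token(N_LAYERS, N_HEADS, HEAD_DIM) * CONTEXT_TOKENS / 2**30
mla_gib = mla_cache_bytes_per_token(N_LAYERS, KV_LORA_RANK, QK_ROPE_HEAD_DIM) * CONTEXT_TOKENS / 2**30

print(f"MHA cache at 1M tokens: {mha_gib:.1f} GiB")
print(f"MLA cache at 1M tokens: {mla_gib:.1f} GiB")
print(f"compression: {mha_gib / mla_gib:.1f}x")
```

Under these assumed dimensions the MLA cache is more than 50 times smaller, which is the difference between a cache that needs many accelerators and one that fits on a single node.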

C. Multi-Token Prediction (MTP)

num_nextn_predict_layers: 1: Like V3, V4-Pro uses MTP. With one MTP layer, the model predicts one additional token beyond the immediate next token at each position, which densifies the training signal and can serve as a draft for speculative decoding to speed up inference.
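The speculative-decoding benefit can be estimated with a simple model. The sketch below assumes each drafted token is accepted independently with the same probability and that the first rejection discards the remaining drafts. The acceptance rate is a free parameter, not a measured figure for this model, and `expected_tokens_per_step` is a hypothetical helper.

```python
def expected_tokens_per_step(accept_prob: float, n_draft: int = 1) -> float:
    """Expected tokens emitted per verification step.

    The verified next token always counts (the p^0 term). Draft i only
    counts if drafts 1..i were all accepted, contributing p^i.
    """
    return sum(accept_prob ** i for i in range(n_draft + 1))

# One MTP layer means one drafted token per step (n_draft=1).
for p in (0.5, 0.8, 0.9):
    print(f"acceptance {p:.0%}: {expected_tokens_per_step(p):.2f} tokens/step")
```

With a single draft token the upper bound is 2 tokens per step, so the decoding speedup from one MTP layer cannot exceed 2x even before verification overhead is counted.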

2. Hardware-Level Optimization: The FP8 Revolution

The quantization_config reveals a native FP8 (e4m3) implementation with a weight_block_size of [128, 128].

- Native FP8 Training/Inference: FP8 stores each weight in 1 byte instead of the 2 bytes BF16 requires, so the model fits in roughly half the VRAM of a BF16 model of the same size, effectively doubling the model capacity of an existing GPU cluster.

- Dynamic Scaling: The setting activation_scheme: "dynamic" suggests that activation quantization scales are computed at runtime from the actual tensor values rather than fixed in advance, maintaining high precision despite the low bit depth.
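Block-wise scaling with a [128, 128] weight block can be illustrated as below. This sketch only computes one scale per block so that the block's largest magnitude maps to 448, the largest finite value of the e4m3 format; it does not perform the actual FP8 rounding, and it is not taken from DeepSeek's code. The helper names are hypothetical.

```python
E4M3_MAX = 448.0  # largest finite magnitude representable in FP8 e4m3

def block_scales(weights, block=(128, 128)):
    """Return one scale per (block_rows x block_cols) tile of a 2D weight list."""
    rows, cols = len(weights), len(weights[0])
    br, bc = block
    scales = []
    for r0 in range(0, rows, br):
        row_scales = []
        for c0 in range(0, cols, bc):
            amax = max(abs(weights[r][c])
                       for r in range(r0, min(r0 + br, rows))
                       for c in range(c0, min(c0 + bc, cols)))
            # weight / scale then lies in [-448, 448]; all-zero tiles get scale 1.
            row_scales.append(amax / E4M3_MAX if amax > 0 else 1.0)
        scales.append(row_scales)
    return scales

def weight_gib(n_params: float, bytes_per_param: int) -> float:
    return n_params * bytes_per_param / 2**30

# Tiny demo with 2x2 tiles so the result is easy to inspect.
demo = [[1.0, -2.0, 0.0, 0.0],
        [3.0, 4.0, 0.0, 896.0]]
print(block_scales(demo, block=(2, 2)))
```

Because each tile gets its own scale, a single outlier weight only degrades precision inside its own 128 x 128 block. The scale storage is small: one scale per 16,384 weights.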

3. Comparison: Domestic Chinese GPUs vs. NVIDIA

DeepSeek-V4 is specifically optimized for the constraints of the Chinese hardware market (restricted access to NVIDIA H100/B200).

Hardware Landscape Comparison

| Feature | NVIDIA B200 (Blackwell) | NVIDIA H200 (Hopper) | Huawei Ascend 910C / Biren BR100 |
|---|---|---|---|
| FP8 Compute | ~9 PFLOPS (with sparsity) | ~4 PFLOPS | Competitive (910C estimated 2.5-3.5 PFLOPS) |
| Memory Bandwidth | 8 TB/s (HBM3e) | 4.8 TB/s (HBM3e) | 1.2-2.5 TB/s (HBM2e/3) |
| Interconnect | NVLink 5 (1.8 TB/s) | NVLink 4 (900 GB/s) | RoCE v2 / proprietary (lower than NVLink) |
| DeepSeek V4 Fit | Excellent (native FP8) | Good (requires heavy optimization) | Primary target |
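The memory-bandwidth row matters most for decoding, where each generated token requires streaming the active weights from memory. The sketch below gives a rough lower bound on per-token latency from bandwidth alone. It assumes roughly 35B active parameters in FP8 (an assumed midpoint, not a published figure), batch size 1, and a single device, and it ignores KV-cache reads, sharding, and interconnect traffic.

```python
def min_ms_per_token(active_params: float, bytes_per_param: float,
                     bandwidth_tb_s: float) -> float:
    """Bandwidth-bound lower limit: time to read the active weights once."""
    bytes_read = active_params * bytes_per_param
    seconds = bytes_read / (bandwidth_tb_s * 1e12)
    return seconds * 1e3

ACTIVE_PARAMS = 35e9   # ASSUMPTION: illustrative active-parameter count
FP8_BYTES = 1

# Bandwidth figures taken from the comparison table.
for name, tb_s in [("B200", 8.0), ("H200", 4.8),
                   ("domestic (low)", 1.2), ("domestic (high)", 2.5)]:
    print(f"{name:16s} >= {min_ms_per_token(ACTIVE_PARAMS, FP8_BYTES, tb_s):.2f} ms/token")
```

Halving bytes per parameter halves this bound, which is one reason FP8 weights are especially valuable on hardware with lower memory bandwidth.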

Domestic GPU Cost & Performance Analysis

- Cost Advantage: A cluster of Huawei Ascend 910B/C or Biren BR100s is estimated to be 30-50% cheaper in CAPEX than black-market or diverted NVIDIA H100s in China.

- The Interconnect Bottleneck: Chinese GPUs suffer from slower chip-to-chip interconnects than NVIDIA's NVLink. DeepSeek-V4-Pro’s MoE architecture (with 384 experts) is a direct response to this. MoE lets the experts be distributed across devices (expert parallelism), and because only a fraction of the model is activated per token, the volume of data that must move between GPUs is reduced.

- Software Stack: DeepSeek uses custom Triton kernels and low-level PTX/SASS optimizations. For Chinese GPUs, they likely utilize CANN (Huawei) or HeCuda, allowing them to squeeze 80-90% of theoretical TFLOPS, narrowing the gap with NVIDIA.

4. Power Consumption & Operational Efficiency

DeepSeek-V4-Pro is an "Environmentally Conscious" model due to its high sparsity.

- Sparse Activation: Although total parameters may exceed 600B, only 6 routed experts (plus 1 shared) are active per token. The power draw of a forward pass is therefore roughly equivalent to that of a much smaller ~30B-40B dense model.

- Cooling & Power in China:

  - Electricity Cost: In Western China (Ningxia/Inner Mongolia), industrial electricity for AI hubs costs roughly $0.05-$0.07 per kWh.

  - Efficiency: Because V4-Pro is optimized for FP8, the "Energy per Token" is significantly lower than for V2 or V3.

- Comparison with NVIDIA:

  - An NVIDIA B200 rack can pull up to 100-120 kW.

  - A comparable cluster using Chinese GPUs to run DeepSeek-V4-Pro would likely require 1.5x more physical space and 1.3x more power to achieve the same throughput as a Blackwell cluster, but the Total Cost of Ownership (TCO) remains lower in China due to cheaper land, cheaper electricity, and the lower purchase price of domestic silicon.
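The power figures above translate into annual electricity cost as follows. This is a back-of-the-envelope sketch that uses the 120 kW rack figure, the 1.3x power multiplier, and the $0.05-$0.07 per kWh range quoted in this section. It assumes continuous operation at full load and excludes cooling overhead, so real costs would differ.

```python
HOURS_PER_YEAR = 24 * 365

def annual_electricity_usd(power_kw: float, usd_per_kwh: float,
                           utilization: float = 1.0) -> float:
    """Energy cost of running a load for one year at the given utilization."""
    return power_kw * HOURS_PER_YEAR * utilization * usd_per_kwh

B200_RACK_KW = 120.0                 # upper figure quoted in the text
DOMESTIC_KW = B200_RACK_KW * 1.3     # 1.3x more power for equal throughput

for price in (0.05, 0.07):
    b200 = annual_electricity_usd(B200_RACK_KW, price)
    domestic = annual_electricity_usd(DOMESTIC_KW, price)
    print(f"${price:.2f}/kWh: B200 rack ${b200:,.0f}/yr, "
          f"domestic equivalent ${domestic:,.0f}/yr")
```

At these prices the extra 30% power adds on the order of tens of thousands of dollars per rack-equivalent per year, which is small relative to hardware purchase prices and is consistent with the TCO argument in this section.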

5. Conclusion: The Strategic Significance

The DeepSeek-V4-Pro configuration is a masterclass in hardware-aware software engineering.

- Independence: It is designed to run at scale on the Huawei Ascend series and other domestic chips by utilizing MoE to hide interconnect latency and MLA to solve memory capacity issues.

- Efficiency: By standardizing on FP8, DeepSeek has effectively doubled the "intelligence per Watt" and "intelligence per Yuan."

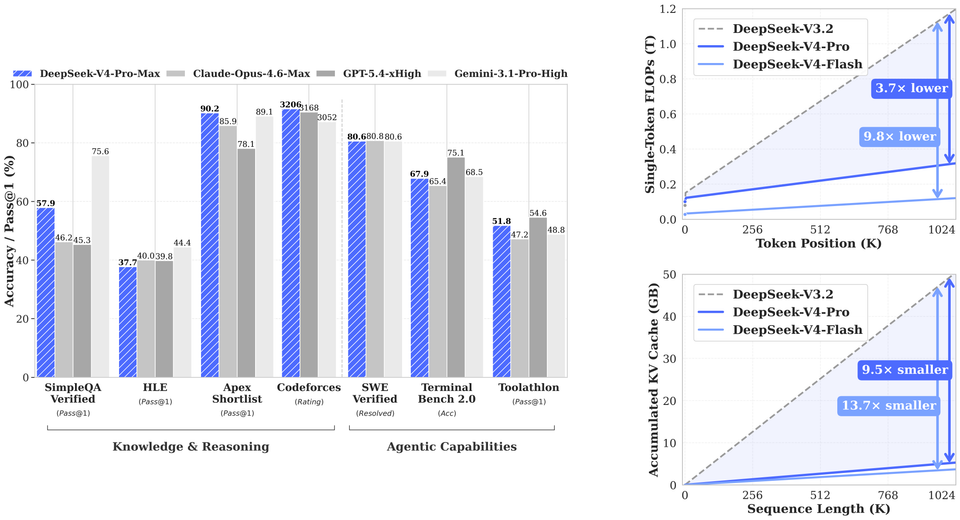

- Capability: The 1-million token context combined with 384 experts suggests this model aims to compete directly with GPT-4o and Claude 3.5 Sonnet, but at a fraction of the inference cost.

Summary Verdict: This model is not just an upgrade; it is a specialized architecture designed to maintain China's AI competitiveness in a resource-constrained (sanctioned) environment. It prioritizes memory compression (MLA) and computational sparsity (MoE) to bypass the "Hardware Gap."